Gotchas and Tricks

This chapter discusses some common beginner mistakes, as well as a few tricks to improve performance.

The example_files directory has all the files used in the examples.

Shell quoting

Always use single quotes for the search pattern, unless other forms of shell expansion are needed and you really know what you are doing.

# space is a shell metacharacter for separating command arguments

$ echo 'a cat and a dog' | grep and a

grep: a: No such file or directory

$ echo 'a cat and a dog' | grep 'and a'

a cat and a dog

# an unquoted # at the start of a word begins a comment, so grep gets no pattern

$ printf 'apple\na#2\nb#3\n' | grep #2

Usage: grep [OPTION]... PATTERNS [FILE]...

Try 'grep --help' for more information.

$ printf 'apple\na#2\nb#3\n' | grep '#2'

a#2

# unquoted *.txt will get expanded to filenames ending with .txt

$ echo 'files *.txt' | grep -F *.txt

$ echo 'files *.txt' | grep -F '*.txt'

files *.txt
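To see how the shell splits the command line before grep ever runs, the following sketch uses a hypothetical show_args function (not part of grep or bash). It reports how many arguments it received and prints each one in brackets, which makes word splitting and comments visible.

```shell
#!/usr/bin/env bash
# show_args is a hypothetical helper: it reports the argument count
# and prints each argument it received inside brackets
show_args() {
    echo "$# argument(s)"
    if (( $# > 0 )); then
        printf '[%s]\n' "$@"
    fi
}

show_args and a     # space separates words: 2 arguments, [and] and [a]
show_args 'and a'   # quoted: 1 argument, [and a]
show_args #2        # unquoted # starts a comment: 0 arguments
show_args '#2'      # quoted: 1 argument, [#2]
```

With grep, the first form means the pattern is and while a is treated as a filename, which is exactly the error shown earlier.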

When double quotes are needed, use them only for the portion that requires them. See mywiki.wooledge: Quotes for a detailed discussion of the various quoting mechanisms and expansions.

$ f='apple'

# ! is special within double quotes (history expansion) and can lead to errors

$ printf '!fruit=apple\n!fruit=pear' | grep "!fruit=$f"

bash: !fruit=: event not found

# use double quotes only where required and single quotes for everything else

$ printf '!fruit=apple\n!fruit=pear' | grep '!fruit='"$f"

!fruit=apple
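A minimal sketch of the same idea inside a script; the variable name pattern is only illustrative. History expansion is enabled by default only in interactive bash shells (set +H turns it off), so the event not found error is typically seen at the prompt rather than in scripts. Mixing the quote types as shown works in both cases.

```shell
#!/usr/bin/env bash
f='apple'

# adjacent quoted strings are joined into a single word:
# '!fruit=' is kept literally, "$f" is expanded to apple
pattern='!fruit='"$f"

printf '%s\n' "$pattern"
printf '!fruit=apple\n!fruit=pear\n' | grep "$pattern"
```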

Patterns starting with hyphen

A pattern cannot start with - because it would be treated as a command line option. Either escape it or use -- before the pattern to indicate that no more options follow (especially handy if the pattern is constructed programmatically). Note that this problem and its solution are not unique to the grep command.

# command assumes - is start of an option, hence the errors

$ printf '-2+3=1\n'

bash: printf: -2: invalid option

printf: usage: printf [-v var] format [arguments]

$ echo '5*3-2=13' | grep '-2'

Usage: grep [OPTION]... PATTERNS [FILE]...

Try 'grep --help' for more information.

# escape it (won't work if -F option is also needed)

$ echo '5*3-2=13' | grep '\-2'

5*3-2=13

# or use --

$ echo '5*3-2=13' | grep -- '-2'

5*3-2=13

$ printf -- '-2+3=1\n'

-2+3=1
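The -- approach also covers the case where escaping does not help: fixed string matching with -F. The sketch below assumes the search string comes from somewhere outside your control, such as user input; search_literal is a hypothetical wrapper name.

```shell
#!/usr/bin/env bash
# search_literal is a hypothetical wrapper: it searches stdin for a fixed
# string that may start with a hyphen
search_literal() {
    # -F: treat the pattern as a fixed string, not a regex
    # --: whatever follows is the pattern (or filenames), never an option
    grep -F -- "$1"
}

printf '5*3-2=13\n-x flag\n' | search_literal '-2'   # 5*3-2=13
printf '5*3-2=13\n-x flag\n' | search_literal '-x'   # -x flag
```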

Also note that GNU grep accepts options even after the pattern and filename arguments. This is useful if you forgot some option(s) and want to edit the previous command from the history.

# no output since + is not a metacharacter with default BRE

$ printf 'boat\nsite\nfoot' | grep -o '[aeo]+t'

# use up arrow to bring the previous command and add -E at the end

$ printf 'boat\nsite\nfoot' | grep -o '[aeo]+t' -E

oat

oot
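A small script version of the above. It assumes GNU grep with the POSIXLY_CORRECT environment variable unset, because setting it makes GNU option parsing stop at the first non-option argument. It also shows the flip side: once -- is used, a trailing -E is no longer an option.

```shell
#!/usr/bin/env bash
# options placed after the pattern are still recognized by GNU grep
printf 'boat\nsite\nfoot\n' | grep -o '[aeo]+t' -E

# after --, the trailing -E is treated as a filename; since no such file
# exists, grep reports an error and exits with status 2
printf 'boat\nsite\nfoot\n' | grep -o -- '[aeo]+t' -E
echo "exit status: $?"
```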

Word boundary differences

The -w option is not exactly the same as using word boundaries in regular expressions. The \b anchor by definition requires word characters to be present, but this is not the case with -w as described in the manual:

-w, --word-regexp Select only those lines containing matches that form whole words. The test is that the matching substring must either be at the beginning of the line, or preceded by a non-word constituent character. Similarly, it must be either at the end of the line or followed by a non-word constituent character. Word-constituent characters are letters, digits, and the underscore. This option has no effect if -x is also specified.

# no output because there are no word characters

$ echo '*$' | grep '\b\$\b'

# matches because $ is preceded by a non-word character

# and followed by the end of the line

$ echo '*$' | grep -w '\$'

*$

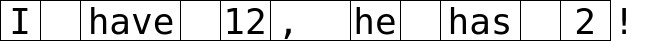

Consider I have 12, he has 2! as a sample text, shown below as image with vertical bars as word boundaries. The last character ! doesn't have the end of word boundary as it is not a word character. This should make the differences between using \b and -w and \<\> features clear.

# \b matches both the start and end of word boundaries

# 1st and 3rd results have space as the second character

# and the 5th result has space as the first character

$ echo 'I have 12, he has 2!' | grep -o '\b..\b'

I 

12

, 

he

 2

# \< and \> strictly match only the start and end word boundaries

$ echo 'I have 12, he has 2!' | grep -o '\<..\>'

12

he

# -w ensures there are no word characters around the matching text

# same as: grep -oP '(?<!\w)..(?!\w)'

$ echo 'I have 12, he has 2!' | grep -ow '..'

12

he

2!
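Since leading and trailing spaces are easy to miss in plain output, here is a sketch that wraps each match in brackets. show_matches is a hypothetical helper; it feeds the sample text to grep with whatever options and pattern it is given.

```shell
#!/usr/bin/env bash
text='I have 12, he has 2!'

# show_matches is a hypothetical helper: it runs grep with the given
# options/pattern on the sample text and wraps each match in brackets
show_matches() {
    printf '%s\n' "$text" | grep "$@" | sed 's/.*/[&]/'
}

show_matches -o '\b..\b'   # [I ] [12] [, ] [he] [ 2] (one per line)
show_matches -o '\<..\>'   # [12] [he]
show_matches -ow '..'      # [12] [he] [2!]
```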

Faster execution for ASCII input

Changing locale to ASCII (assuming that the default is not ASCII) can give a significant speed boost.

# time shown is best result from multiple runs

# speed benefit will vary depending on computing resources, input, etc

$ time grep -xE '([a-d][r-z]){3}' words.txt > f1

real 0m0.036s

# LC_ALL=C will give ASCII locale, active only for this command

$ time LC_ALL=C grep -xE '([a-d][r-z]){3}' words.txt > f2

real 0m0.008s

# check that results are same for both versions of the command

$ diff -s f1 f2

Files f1 and f2 are identical

Here's another example.

$ time grep -xE '([a-z]..)\1' words.txt > f1

real 0m0.139s

$ time LC_ALL=C grep -xE '([a-z]..)\1' words.txt > f2

real 0m0.082s

# clean up temporary files

$ rm f[12]

There have been plenty of speed improvements in recent versions; see the release notes for details. See also this article on LC_ALL=C usage, especially on when it is not suitable.
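One case where LC_ALL=C is not suitable is text containing multibyte characters. The sketch below assumes UTF-8 encoded input: in the C locale grep treats every byte as a character, so the two-byte é in café counts as two characters. In a UTF-8 locale, grep would instead count it as one.

```shell
#!/usr/bin/env bash
# 'café' encoded in UTF-8: é is stored as the two bytes 0xC3 0xA9
word=$'caf\xc3\xa9'

# C locale: 5 bytes means 5 characters, so only the 5-dot pattern matches
printf '%s\n' "$word" | LC_ALL=C grep -c -x '.....'   # 1
printf '%s\n' "$word" | LC_ALL=C grep -c -x '....'    # 0
```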

Speed benefits with PCRE

Usually, BRE/ERE perform better than PCRE. But if the search pattern has backreferences, PCRE can turn out to be faster. As mentioned earlier, the Known Bugs section of man grep says (this applies to BRE/ERE):

Large repetition counts in the {n,m} construct may cause grep to use lots of memory. In addition, certain other obscure regular expressions require exponential time and space, and may cause grep to run out of memory. Back-references are very slow, and may require exponential time.

$ time LC_ALL=C grep -xE '([a-z]..)\1' words.txt > f1

real 0m0.082s

$ time grep -xP '([a-z]..)\1' words.txt > f2

real 0m0.011s

# clean up

$ rm f[12]
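Before switching a search from ERE to PCRE for speed, it is worth confirming that both engines return the same lines for your pattern. A minimal check on made-up input, assuming your grep was built with PCRE support (grep -P reports an error otherwise):

```shell
#!/usr/bin/env bash
# made-up sample lines; only lines made of a 3-character chunk (starting
# with a lowercase letter) repeated twice should match
input=$'abcabc\nabcabd\nxyzxyz\n1bc1bc'

ere=$(printf '%s\n' "$input" | LC_ALL=C grep -xE '([a-z]..)\1')
pcre=$(printf '%s\n' "$input" | grep -xP '([a-z]..)\1')

printf '%s\n' "$ere"
if [ "$ere" = "$pcre" ]; then
    echo 'ERE and PCRE results are identical'
else
    echo 'ERE and PCRE results differ'
fi
```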

Parallel execution

When searching huge code bases, consider using more than one processing resource (if available) to speed up the task.

When using xargs -P, output might get mangled unless you force grep to flush its output after every line. Alternatively, you can use the parallel command; see unix.stackexchange: xargs vs parallel for more details.

Consider this example dataset:

# note that the download size is 154M

$ wget https://github.com/torvalds/linux/archive/v4.19.tar.gz

$ tar -zxf v4.19.tar.gz

$ du -sh linux-4.19

908M linux-4.19

Here's a comparison between grep -r and using xargs for parallel processing. This illustration assumes that the order of output lines does not matter. Note that this is just a single sample; results will vary wildly depending on the search term, available processing power and so on. You can use the nproc command to find out how many processing units are available, which is a good guide to how many processes you can run in parallel (four on my machine).

$ cd linux-4.19

# note that the first run is significantly slower than later runs

# due to caching, in this case 0m36.385s vs 0m0.301s

$ time grep -rl 'include' . > ../f1

real 0m0.301s

# sometimes find+grep may be faster than grep -r, so try that first

# that turns out not to be the case here, though

# also note the use of -print0 and -0 to handle filenames correctly

$ time find -type f -print0 | xargs -r0 grep -l 'include' > ../f2

real 0m0.325s

# much better performance as xargs will use as many processes as possible

# --line-buffered will prevent output mangling

$ time find -type f -print0 | \

> xargs -r0 -P0 grep -l --line-buffered 'include' > ../f3

real 0m0.180s

# check if the outputs are identical

$ diff -sq <(sort ../f1) <(sort ../f2)

Files /dev/fd/63 and /dev/fd/62 are identical

$ diff -sq <(sort ../f1) <(sort ../f3)

Files /dev/fd/63 and /dev/fd/62 are identical

# clean up

$ rm ../f[1-3]
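The find and xargs pipeline can be wrapped in a reusable function. This is a sketch under a few assumptions: parallel_grep_l is a hypothetical name, GNU find, xargs and coreutils are available, the process count comes from nproc instead of -P0, and -n 100 (at most 100 files per grep invocation) is an arbitrary choice that makes sure the work is split into several batches even for smaller trees.

```shell
#!/usr/bin/env bash
# parallel_grep_l is a hypothetical helper: list files under a directory
# that match a pattern, running several grep processes at once
parallel_grep_l() {
    local dir=$1 pattern=$2
    # -print0 / -0: NUL-separated names handle spaces and newlines safely
    # -r: don't run grep at all if find produces no files
    # -P "$(nproc)": run up to one grep process per processing unit
    # -n 100: hand each grep at most 100 files (arbitrary batch size)
    # --line-buffered: flush after every line to avoid mangled output
    find "$dir" -type f -print0 |
        xargs -r0 -P "$(nproc)" -n 100 grep -l --line-buffered -- "$pattern"
}
```

As with the earlier comparison, output order is not guaranteed, so sort the results if you need to compare them with grep -rl.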

Summary

With this, the chapters on GNU grep are done. I would highly suggest maintaining your own list of frequently used grep commands, tips, tricks and so on.

The next chapter is on ripgrep, which has gained immense popularity, mainly due to its speed, recursive options and customization features. Also, do check out the various resources linked in the Further Reading chapter.